Skydancer Field Station

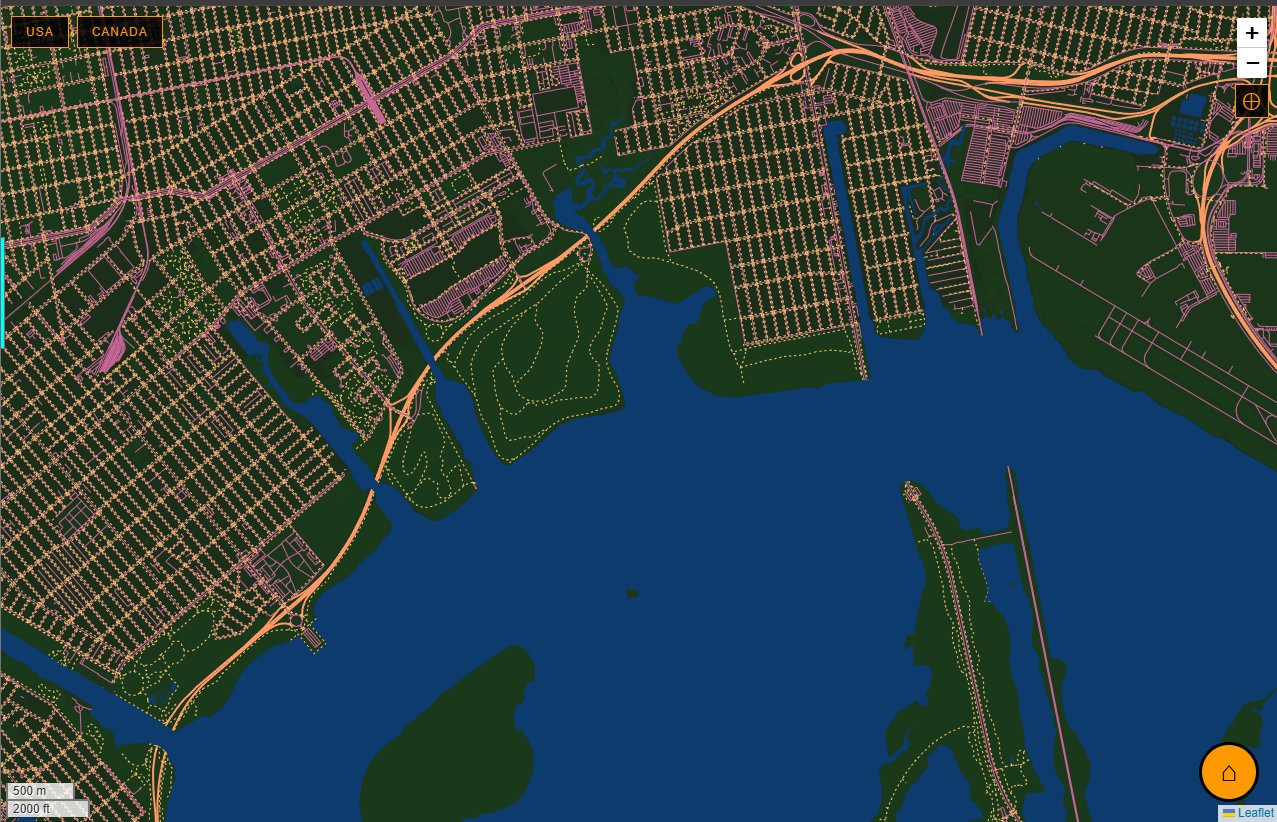

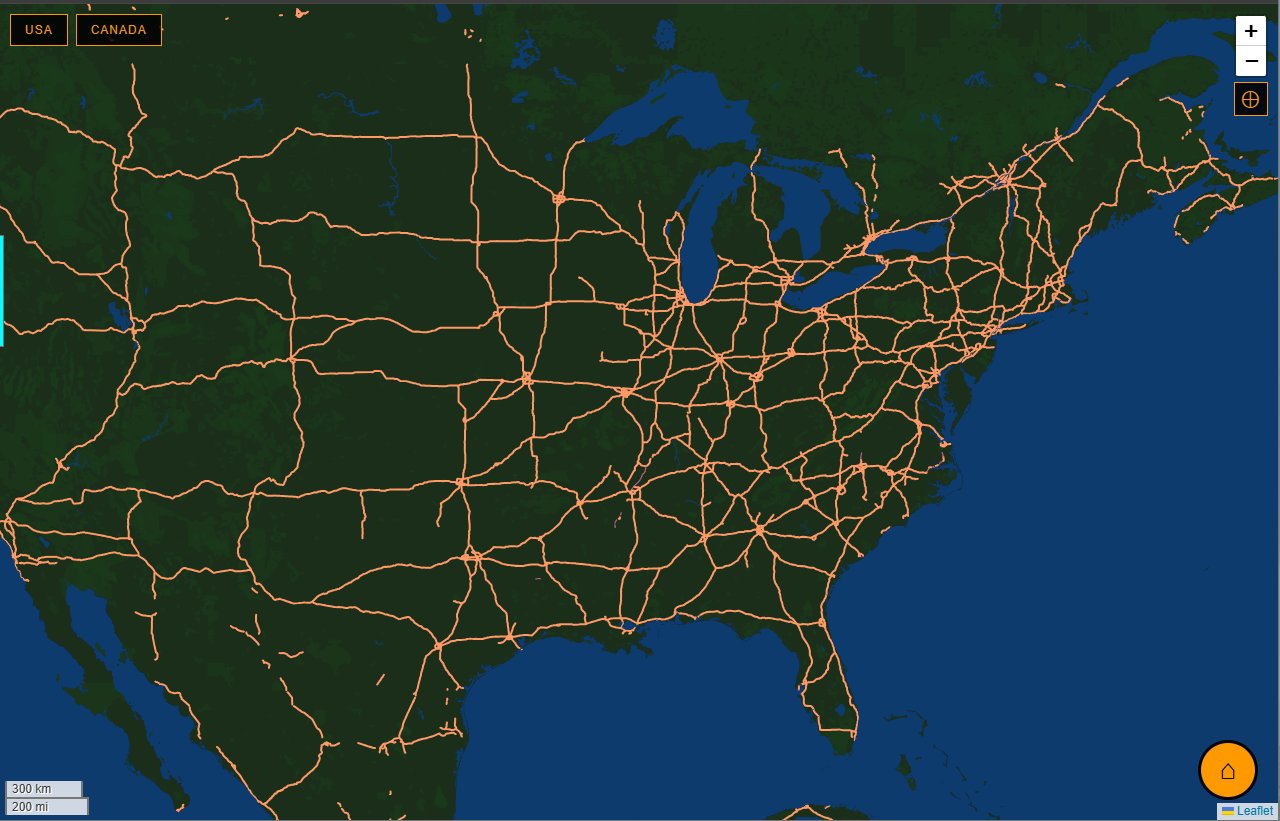

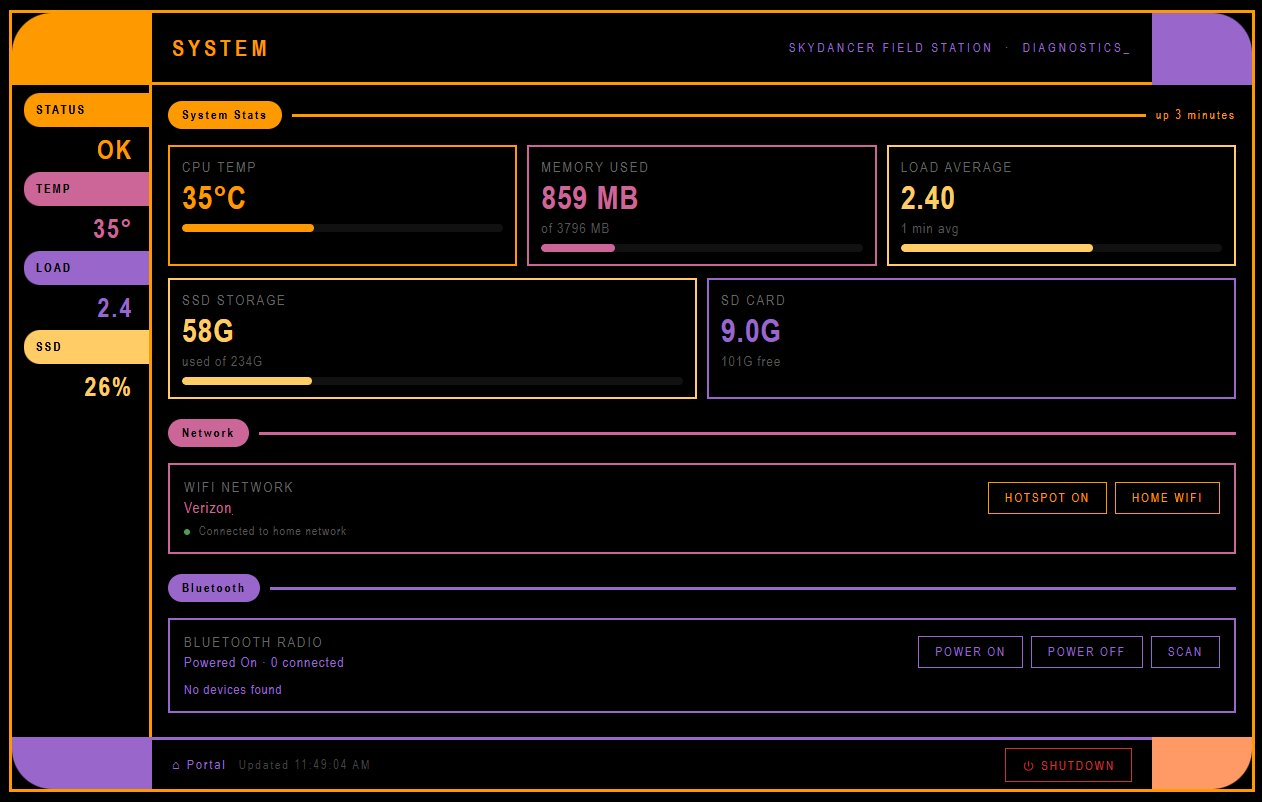

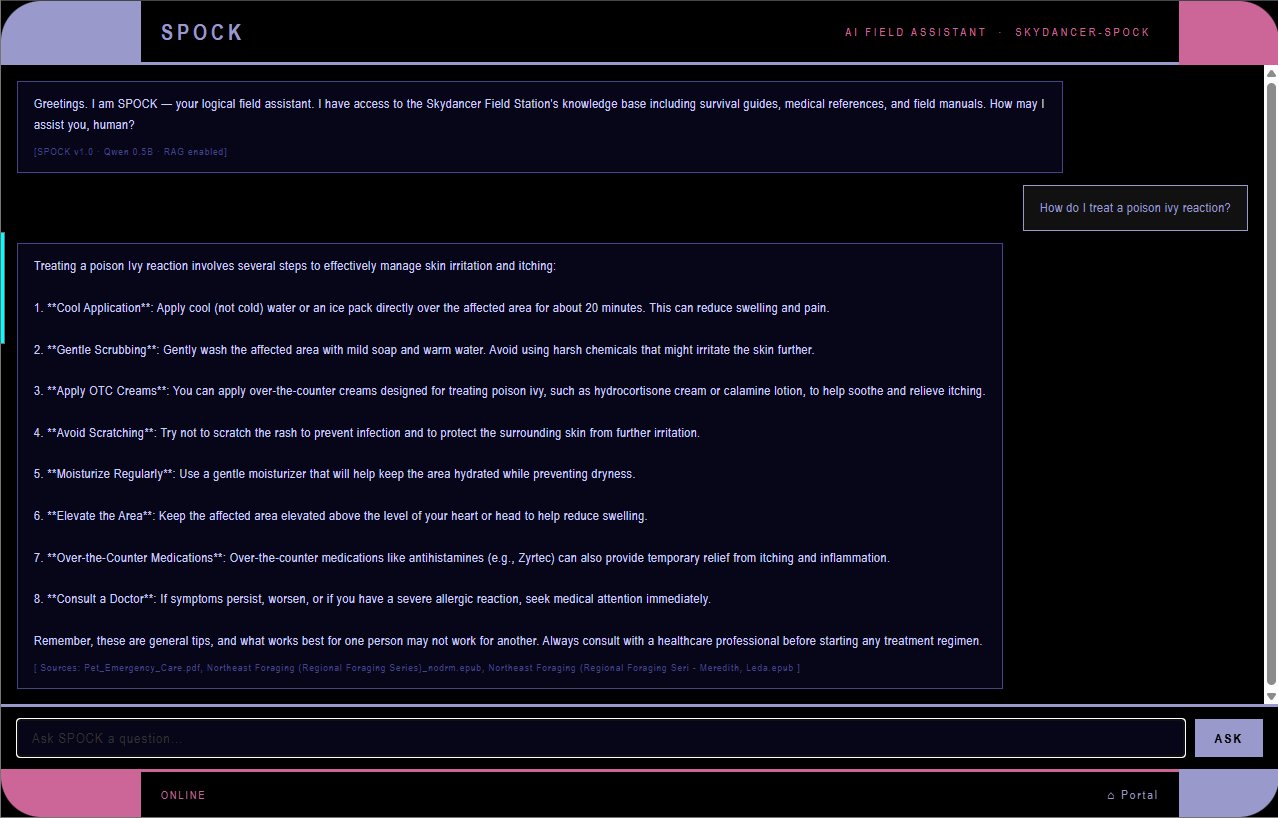

A self-contained, offline-accessible intranet built on a Raspberry Pi 4 for travel and off-grid use. It provides offline maps, knowledge bases, a media library, note-taking, and astronomical data without internet connectivity.

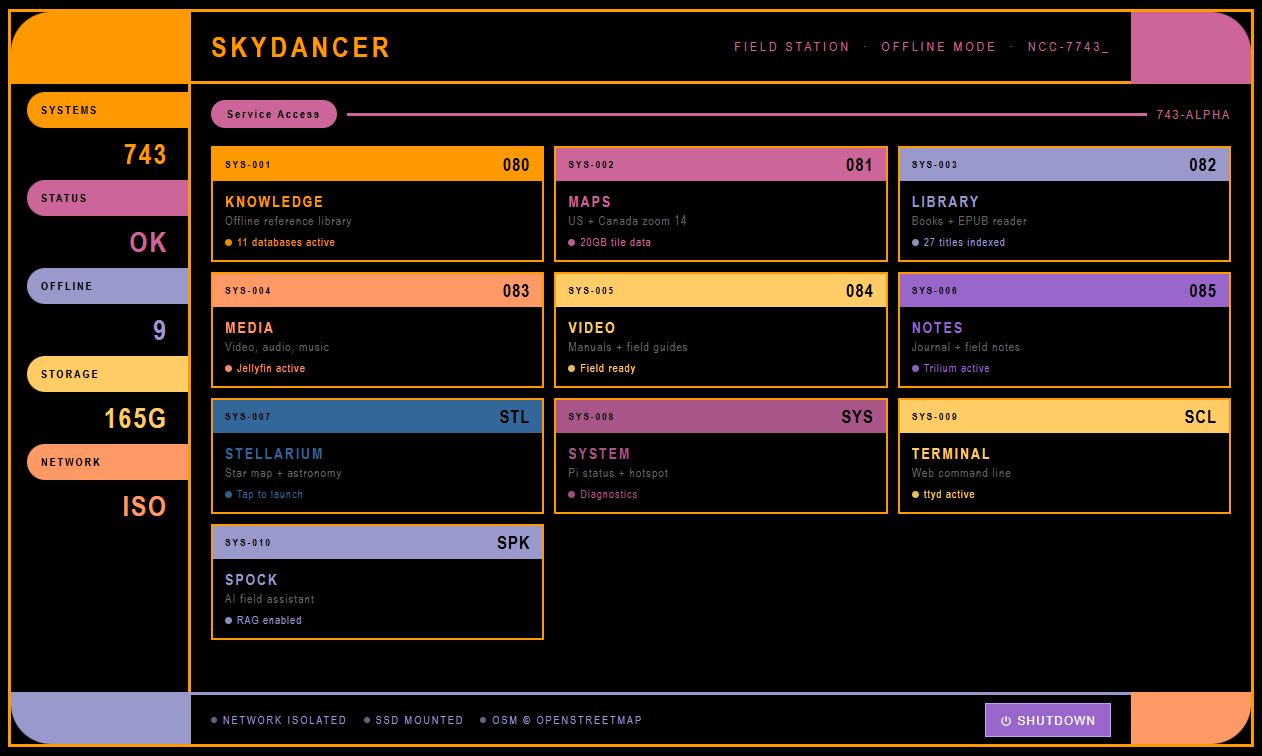

LCARS-themed portal homepage showing all field station services